Action Prediction: e.g. Walking

Real-world POV Data: To Be Built with People, Not Just Algorithms

We are building a system to capture first-person human interaction data from real environments using wearable smart glasses.

Our smart-glasses platform is ready. The next step is deploying it with real contributors to begin collecting structured action data in everyday environments.

Upload a short POV video to see a sample 4-second window and the dataset-style JSON we generate from it.

Note: The model can make mistakes. We are actively improving our backend, and server memory limits can reduce annotation quality.


We sample 3 moments (1 second apart) from a random 4-second window.
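As a rough illustration of that sampling step, here is a minimal Python sketch. It is not our production code. The placement of the three moments inside the window (centred, at offsets of 1 s, 2 s, and 3 s), the function names, and the JSON field names are all assumptions made for this example.

```python
import json
import random

WINDOW_SECONDS = 4.0   # length of the randomly chosen window
NUM_MOMENTS = 3        # number of sampled moments
SPACING_SECONDS = 1.0  # gap between consecutive moments


def sample_moments(duration_s, rng=None):
    """Pick a random 4-second window and 3 timestamps, 1 second apart, inside it."""
    if duration_s < WINDOW_SECONDS:
        raise ValueError("video is shorter than the 4-second window")
    if rng is None:
        rng = random.Random()
    window_start = rng.uniform(0.0, duration_s - WINDOW_SECONDS)
    # Assumption: centre the moments in the window (offsets 1 s, 2 s, 3 s).
    span = (NUM_MOMENTS - 1) * SPACING_SECONDS
    first_offset = (WINDOW_SECONDS - span) / 2.0
    moments = [window_start + first_offset + i * SPACING_SECONDS
               for i in range(NUM_MOMENTS)]
    return window_start, moments


def build_record(duration_s, action_label, rng=None):
    """Assemble a dataset-style record; the field names are hypothetical."""
    window_start, moments = sample_moments(duration_s, rng)
    return {
        "window": {
            "start_s": round(window_start, 3),
            "end_s": round(window_start + WINDOW_SECONDS, 3),
        },
        "frames": [{"t_s": round(t, 3)} for t in moments],
        "action_prediction": action_label,
    }


if __name__ == "__main__":
    # A 30-second clip with a placeholder label; a real label would come from the model.
    print(json.dumps(build_record(30.0, "walking", random.Random(0)), indent=2))
```

Passing a seeded `random.Random` makes the chosen window reproducible, which helps when comparing annotations of the same clip.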


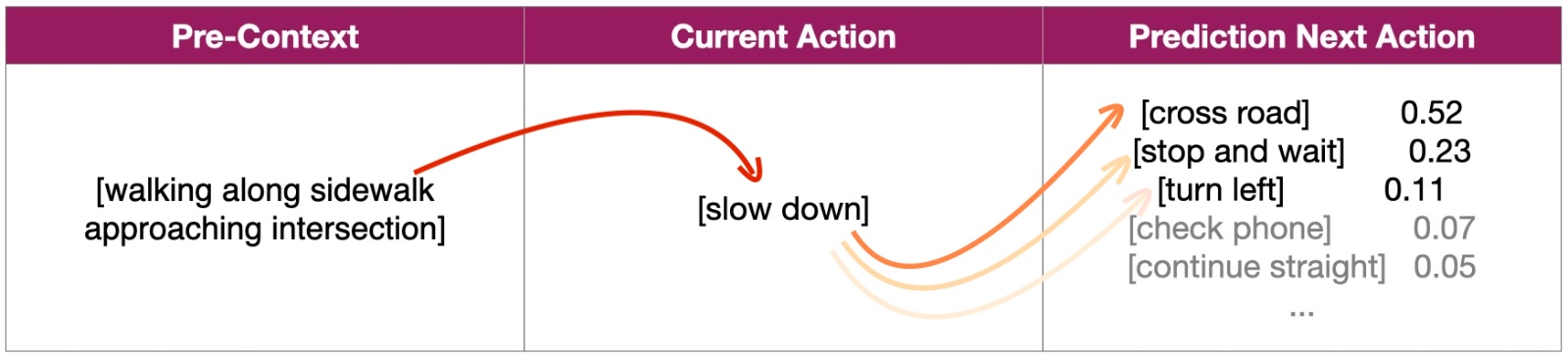

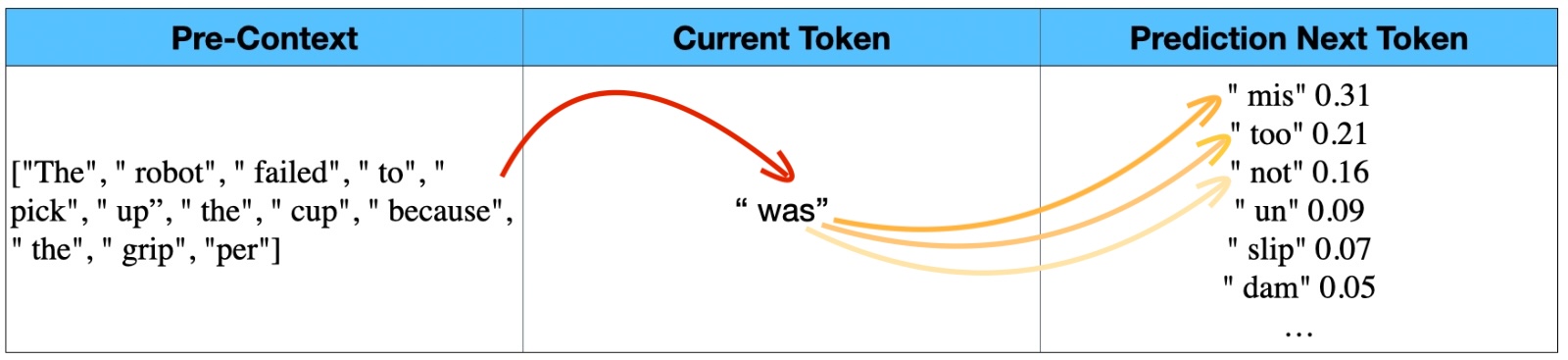

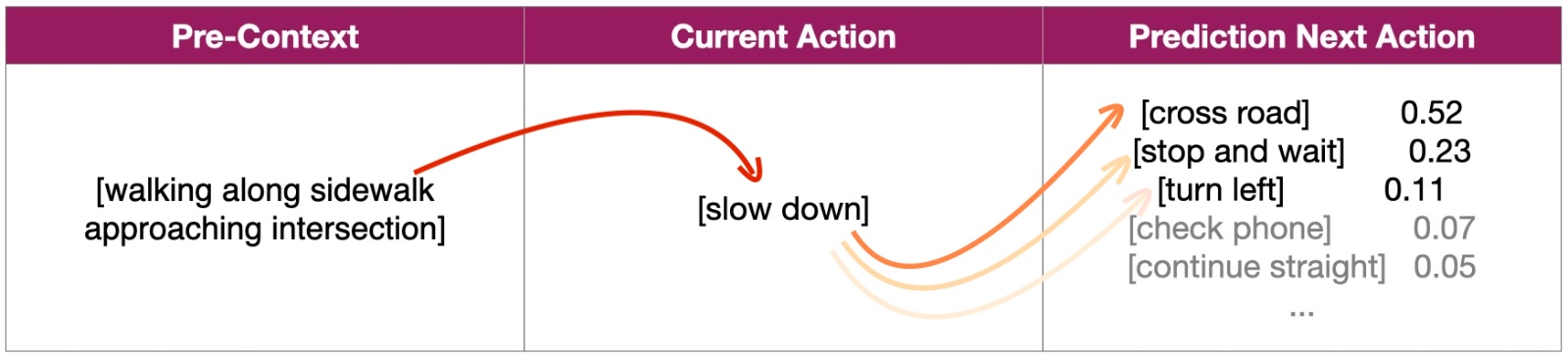

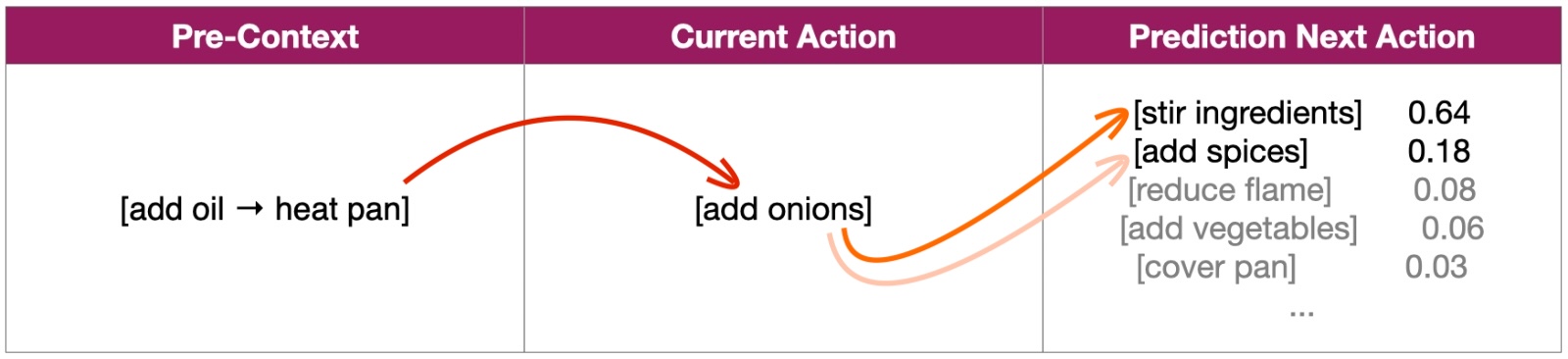

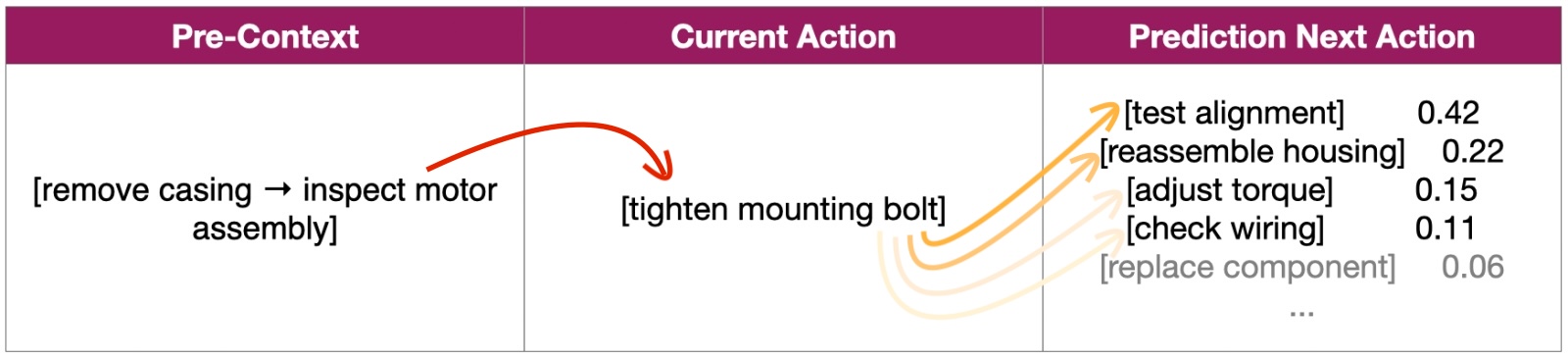

Language models predict the next token from context. Embodied AI systems must predict the next physical action. Real-world first-person data is critical to bridge that gap.

The comparison with language models is a helpful analogy, but physical action prediction is the real bottleneck.

Real-world first-person data is required to train models that understand physical actions the same way language models understand words.

Instead of lab environments, we plan to work with real contributors whose daily routines naturally include rich interaction with objects.

These environments produce the authentic interaction patterns that robotics systems must eventually understand.

Daily-life environments contain long-tail behaviors that simulations and lab data miss.

This leads to data closer to real deployment conditions.

Data collection does not scale through uploads alone.

We support deployment through:

Result: a reliable, repeatable data pipeline.

Prototype → MVP → Product & CES showcase → Deployment

We are not starting from zero.

We have already developed wearable smart glasses enabling:

Having working hardware lets us execute faster than software-only approaches.

We plan to pass all captured footage through automated privacy filters before processing or annotation.

Privacy will be built into the infrastructure.